The History of Eyeglasses: From Invention to Today

Welcome to the fascinating journey through the evolution of eyewear, where function meets fashion in the most visionary way! Over the centuries, eyeglasses have transcended mere vision correction tools; they’ve become iconic fashion statements. Join us as we explore the remarkable history of eyeglasses, from their rudimentary beginnings to today’s trendsetting styles.

The Blur Before Eyeglasses

There was a time when everything was blurry, because eyeglasses hadn’t graced the world yet. If you suffered from nearsightedness, farsightedness, or astigmatism, clear vision was an elusive dream.

It wasn’t until the late 13th century that corrective lenses emerged, albeit in a primitive form. Before that, people with imperfect vision had two choices: accept their blurry fate or get creative.

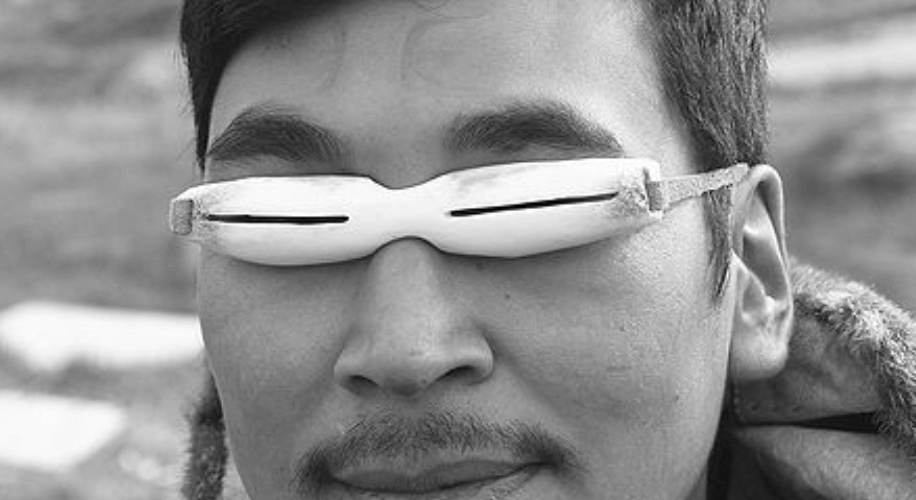

Innovation Through Improvisation

Our ingenious ancestors chose the latter. Prehistoric Inuits devised makeshift sunglasses using flattened walrus ivory to shield their eyes from the sun’s glare. In ancient Rome, Emperor Nero held polished emeralds before his eyes to combat sunlight’s harshness during gladiator spectacles. His tutor, Seneca, even read through a large glass bowl filled with water, an early form of magnification.

These innovations marked the beginning of corrective lenses, which evolved further in Venice around 1000 C.E. Seneca’s water-filled bowl gave way to flat-bottom, convex glass spheres that magnified text, known as “reading stones.” Monks used these to continue reading and illuminating manuscripts after hitting the age of 40.

When Were Eyeglasses Invented?

The exact date is a subject of debate, but it’s widely accepted that the first pair of corrective eyeglasses was born in Italy between 1268 and 1300. These early spectacles consisted of two magnifying lenses connected by a hinge, perched on the bridge of the nose.

The earliest depictions of this eyewear style can be found in paintings from the mid-14th century by Tommaso da Modena. Monks sported these early pince-nez (French for “pinch nose”) style glasses while reading and copying manuscripts.

Spreading Across Europe

From Italy, the art of eyeglasses spread across Europe, including the “Low” or “Benelux” countries, Germany, Spain, France, and England. These early glasses mainly featured convex lenses for magnification. In England, eyeglass makers began advertising reading glasses as a boon for those over 40. In 1629, the Worshipful Company of Spectacle Makers was established with the slogan: “A blessing to the aged.”

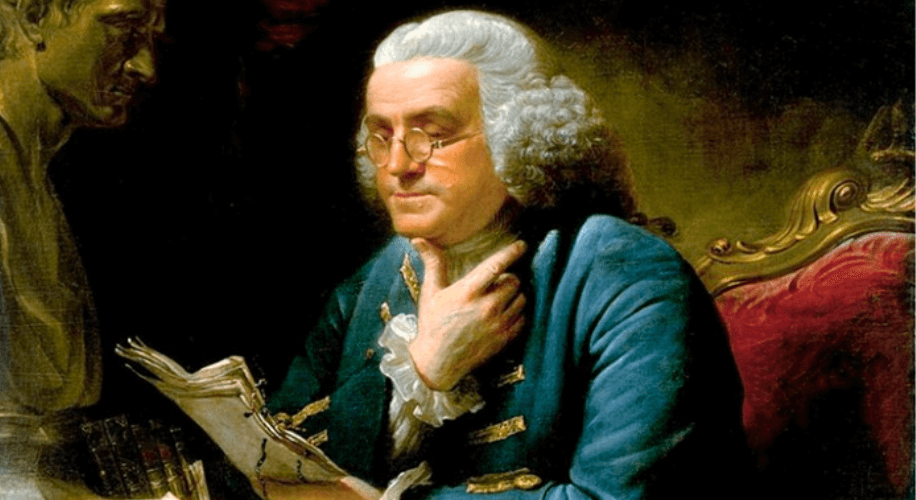

Seeing Beyond: The Invention of Bifocals

The 18th century brought significant developments. Bifocal lenses, often attributed to Benjamin Franklin, emerged in England during the 1760s. These lenses combined distance and near-vision correction in a single lens, a breakthrough for eyewear.

A Revolution in Eyeglass Design

One pivotal moment came in 1825 when English astronomer George Airy crafted concave cylindrical lenses for his nearsighted astigmatism. Trifocals followed suit in 1827. Around the same time, the monocle and lorgnette (eyeglasses on a stick) made their debuts, offering unique eyewear choices.

The Golden Age of Hollywood Eyewear

Fast-forward to the early 20th century when movie stars became trendsetters. Silent film star Harold Lloyd’s round tortoiseshell glasses set a new fashion standard. These glasses also reintroduced temple arms to the frame.

Innovations in Modern Times

Progressive lenses arrived in 1959, transforming eyeglass functionality. Today, most eyeglass lenses are crafted from lightweight, shatter-resistant plastic.

Photochromic lenses, which darken in sunlight and lighten indoors, first appeared in the late 1960s. They have since evolved into a spectrum of colors, offering style and sun protection.

Blue light glasses were developed in the 1960s but didn’t rise to fame until the 2000s, when people turned to them to combat the digital age’s new visual challenges, such as eye strain from screens.

The Cycle of Fashion

Eyeglass styles have evolved over the years, cycling through trends. Gold-rimmed and rimless glasses have had their moments. In the 1970s, oversized wire-framed glasses were in vogue. Now, retro styles like square, horn-rimmed, and brow-line glasses are reclaiming the optical spotlight.

As we celebrate the ever-evolving world of eyewear, from its origins to the latest trends, remember that you can explore a vast array of styles, from retro classics to cutting-edge designs, at Zenni Optical. Discover your unique eyewear statement today!
